We have the following setup, which is not working:

- load balancer with SSL termination

- public front

- internal front

- backend for the Apache front

- backend for nginx

We got to the point where everything works except the most important part: video playback. Videos can be uploaded, they get converted, and you can play the flavor previews. However, when you try to watch a video in the player, it does not play. It seems to be a CORS issue.

For that, we have tried several things:

- CORS settings in HAProxy, Apache and nginx, individually and collectively.

- Passing SSL through to Apache and nginx. That did not work either.

- Putting nginx on the front host. That did not work either.
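One thing worth ruling out when CORS headers are set in several layers at once: if more than one layer adds `Access-Control-Allow-Origin`, the response ends up with several values and browsers reject it. The following is only a minimal sketch of that check, not a full CORS implementation (it ignores credentials and preflight). The function name and the `media.example.com` origin are hypothetical.

```python
def cors_allows(origin, response_headers):
    """Return True if the Access-Control-Allow-Origin check would pass.

    `response_headers` is a list of (name, value) pairs so that repeated
    headers are preserved, as happens when several layers each add one.
    """
    values = [value.strip() for name, value in response_headers
              if name.lower() == "access-control-allow-origin"]
    # A repeated or comma-joined header fails the check in browsers.
    if len(values) != 1 or "," in values[0]:
        return False
    return values[0] in ("*", origin)


# Hypothetical case: only one layer sets the header, then two layers do.
single = [("Access-Control-Allow-Origin", "https://media.example.com")]
doubled = single + [("Access-Control-Allow-Origin", "*")]
print(cors_allows("https://media.example.com", single))   # True
print(cors_allows("https://media.example.com", doubled))  # False
```

If this is what happens, setting the header in exactly one layer would be the thing to try.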

It looks like it boils down to SSL issues between either nginx and Apache or nginx and HAProxy. Either way, we clearly see a problem that we do not understand:

Setting up VOD_PACKAGER_HOST and VOD_PACKAGER_PORT

As I understand it, these designate the machine that does the ‘heavy lifting’ for the transcoding and live streaming of video.

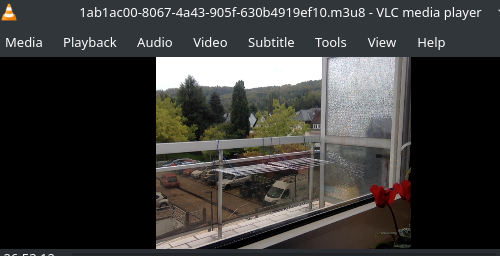

That is great, but when a video is played, it looks like the stream goes from the front to that host over the Internet (it is not an internal host-to-host request, but a public one).

So if you upload a video on a Kaltura front, it works, it transcodes, but then when playing it, it calls that host.

Now, let's say we have:

front1 and front2 at 10.0.2.10 and 10.0.2.11 respectively.

vod1 and vod2 (or whatever) at 10.0.2.20 and 10.0.2.21.

If the public hostname in the LB is:

And you set up a VIP internally or, to make it easier, use vod1's internal IP as the VOD_PACKAGER_HOST, this will eventually cause trouble: when you play a video, the player is going to go to, for example, vod1:8443. And of course that is not going to fly, as the request will never reach it.
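To make the reachability problem concrete, here is a rough sketch. It assumes the URL handed to the player is built directly from VOD_PACKAGER_HOST and VOD_PACKAGER_PORT, which is what the behaviour described suggests but is not confirmed. The helper names and `vod.example.com` are hypothetical, and the "bare hostname means internal" rule is only a heuristic.

```python
import ipaddress


def playback_base_url(host, port, scheme="https"):
    """Build the base URL a browser would be asked to fetch from."""
    return f"{scheme}://{host}:{port}"


def reachable_from_internet(host):
    """Heuristic: private IPs and dot-less hostnames are internal-only."""
    try:
        return ipaddress.ip_address(host).is_global
    except ValueError:
        # Not an IP literal; treat a bare name like "vod1" as internal.
        return "." in host


for host in ("10.0.2.20", "vod1", "vod.example.com"):
    print(playback_base_url(host, 8443), reachable_from_internet(host))
```

Only the last of the three values would be usable by a browser outside the internal network.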

Now if you make the nginx host reachable on the Internet, for example through the same LB, you can send it to a different back-end and it should work, right?

It kind of does, if you try something ‘static’ like the nginx_status or vod_status. However, when the front calls a video in vod from the player frame, it does not load, it falls into the CORS pit, and we see different messages in LB, Apache and Nginx ( I will put them below).

So in LB we see a 200 ( the frame loads ), in Apache a big error ( the resource does not load ), and in nginx an SSL handshake error to upstream.

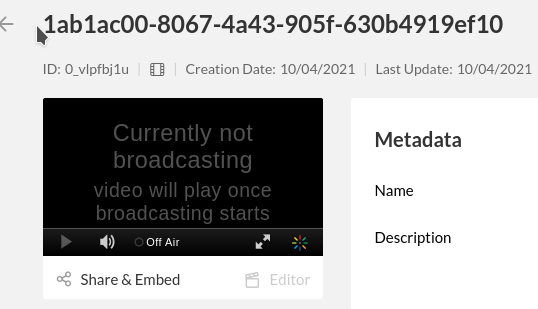

Something else is intriguing: it is not 100% clear to us what calls the VOD_PACKAGER_HOST and where it is configured from.

The ANS file, when called, seems to be the one that sets local.ini in the configurations directory. However, once set, the value does not change in the database (I would expect it to be easily changeable).

Instead, even after making the corresponding changes in the ANS files, for posterity, and in the local.ini where the vod string is set, the only way to make an effective change to how the application behaves (what it uses when you hit play in the video player) seems to be to edit the value DIRECTLY in the DB, in the delivery_profile table.

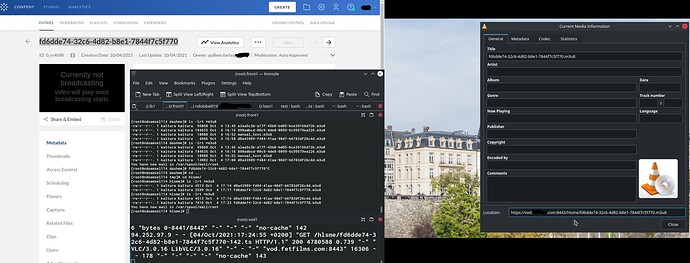

The output we get right now:

The LB:

Oct 1 16:31:26 localhost haproxy[111550]: 94.252.97.9:46308 [01/Oct/2021:16:31:26.595] public~ dynamic/dynsrv1 0/0/0/185/185 200 913 - - --VN 1/1/0/0/0 0/0 {} {} "GET /p/102/sp/10200/playManifest/entryId/0_56yy00s5/flavorIds/0_swut18ka,0_f6tewc72,0_xlyn2tmi,0_wt1bf91a,0_bk0k2xk8/format/mpegdash/protocol/https/a.mpd?referrer=aHR0cHM6Ly9tZWRpYS5mZXRmaWxtcy5jb20=&ks=MTk1YzhjMDk4ZDM5NjhjNDQzNWFhNDk1YTM4MDZkZmJkMmJkYmQ4ZHwxMDI7MTAyOzE2MzMxMzAwOTA7MjsxNjMzMDQzNjkwLjU4MjtndWlsbGVtLmxpYXJ0ZUBhbWMubHU7ZGlzYWJsZWVudGl0bGVtZW50LGFwcGlkOmttYzs7&playSessionId=33b7953b-dc95-efb0-2717-09b1236f3823&clientTag=html5:v2.85&uiConfId=23448173&responseFormat=jsonp&callback=jQuery11110458314859665804_1633098649674&_=1633098649675 HTTP/1.1"

The play request is sent to dynamic (the front running Apache).
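For reference, the routing can be read straight off the HAProxy line: after the client address and the accept timestamp come the frontend, then `backend/server`, then the timers and the status code. A small sketch that pulls those fields out, assuming HAProxy's default HTTP log layout as seen above (the sample line has its request path shortened):

```python
import re

# Fields of interest in an HAProxy HTTP log line.
HAPROXY_RE = re.compile(
    r"(?P<client>\S+) \[(?P<accepted>[^\]]+)\] "
    r"(?P<frontend>\S+) (?P<backend>[^/\s]+)/(?P<server>\S+) "
    r"(?P<timers>[\d/+-]+) (?P<status>\d{3}) (?P<bytes>\d+) "
)


def parse_haproxy(line):
    """Return a dict of routing fields, or None if the line does not match."""
    match = HAPROXY_RE.search(line)
    return match.groupdict() if match else None


sample = (
    "Oct 1 16:31:26 localhost haproxy[111550]: 94.252.97.9:46308 "
    "[01/Oct/2021:16:31:26.595] public~ dynamic/dynsrv1 0/0/0/185/185 "
    '200 913 - - --VN 1/1/0/0/0 0/0 {} {} "GET /p/102/... HTTP/1.1"'
)
fields = parse_haproxy(sample)
print(fields["frontend"], fields["backend"], fields["server"], fields["status"])
# public~ dynamic dynsrv1 200
```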

10.0.2.7 - - [01/Oct/2021:16:35:13 +0200] "GET /p/102/sp/10200/playManifest/entryId/0_56yy00s5/flavorIds/0_swut18ka,0_f6tewc72,0_xlyn2tmi,0_wt1bf91a,0_bk0k2xk8/format/mpegdash/protocol/https/a.mpd?referrer=aHR0cHM6Ly9tZWRpYS5mZXRmaWxtcy5jb20=&ks=MTk1YzhjMDk4ZDM5NjhjNDQzNWFhNDk1YTM4MDZkZmJkMmJkYmQ4ZHwxMDI7MTAyOzE2MzMxMzAwOTA7MjsxNjMzMDQzNjkwLjU4MjtndWlsbGVtLmxpYXJ0ZUBhbWMubHU7ZGlzYWJsZWVudGl0bGVtZW50LGFwcGlkOmttYzs7&playSessionId=542b3a22-9583-143a-ba46-46cca1f8b5ad&clientTag=html5:v2.85&uiConfId=23448173&responseFormat=jsonp&callback=jQuery1111025388460390376655_1633098903176&_=1633098903177 HTTP/1.1" 200 915 0/177076 "[URL unavailable]" "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/93.0.4577.82 Safari/537.36" "-" 10.0.2.7 "-" "media.xxxxx.com" 30193 1105483492, 1633098913 - 1339 "-" "94.252.97.9" "-" "-" "no-store, no-cache, must-revalidate, post-check=0, pre-check=0" -

[errorMessage] => pid : 102 | uiconfId : 23448173 | referrer : https://media.xxxxx.com | didSeek : false | resourceUrl : https://media.xxxxx.com:443/p/102/sp/10200/playManifest/entryId/0_56yy00s5/flavorIds/0_swut18ka,0_f6tewc72,0_xlyn2tmi,0_wt1bf91a,0_bk0k2xk8/format/mpegdash/protocol/https/a.mpd?referrer=aHR0cHM6Ly9tZWRpYS5mZXRmaWxtcy5jb20=&ks=MTk1YzhjMDk4ZDM5NjhjNDQzNWFhNDk1YTM4MDZkZmJkMmJkYmQ4ZHwxMDI7MTAyOzE2MzMxMzAwOTA7MjsxNjMzMDQzNjkwLjU4MjtndWlsbGVtLmxpYXJ0ZUBhbWMubHU7ZGlzYWJsZWVudGl0bGVtZW50LGFwcGlkOmttYzs7&playSessionId=542b3a22-9583-143a-ba46-46cca1f8b5ad&clientTag=html5:v2.85&uiConfId=23448173 | userAgent : Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/93.0.4577.82 Safari/537.36 | playerCurrentTime : 0 | playerLib : Native | streamerType : mpegdash | message : {"severity":2,"category":1,"code":1002,"data":["[URL unavailable],swut18ka,f6tewc72,xlyn2tmi,wt1bf91a,bk0k2xk8,/forceproxy/true/name/a.mp4.urlset/manifest.mpd"],"handled":false} | code : 1000 | key : 1000 |

[1] => pid : 102 | uiconfId : 23448173 | referrer : https://media.xxxxx.com | didSeek : false | resourceUrl : [URL unavailable] | userAgent : Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/93.0.4577.82 Safari/537.36 | playerCurrentTime : 0 | playerLib : Native | streamerType : mpegdash | message : {"severity":2,"category":1,"code":1002,"data":["https://vod.xxxx.com/dash/p/102/sp/10200/serveFlavor/entryId/0_56yy00s5/v/2/ev/6/flavorId/0_,swut18ka,f6tewc72,xlyn2tmi,wt1bf91a,bk0k2xk8,/forceproxy/true/name/a.mp4.urlset/manifest.mpd"],"handled":false} | code : 1000 | key : 1000

The front gets the request from the LB and tries to send it to the VOD (publicly rather than internally, it seems?), and fails.

2021/10/01 16:40:32 [error] 21648#21648: *36 SSL_do_handshake() failed (SSL: error:140770FC:SSL routines:SSL23_GET_SERVER_HELLO:unknown protocol) while SSL handshaking to upstream, client: 10.0.2.7, server: _, request: "GET /hls/p/102/sp/10200/serveFlavor/entryId/0_56yy00s5/v/2/ev/6/flavorId/0_wt1bf91a/name/a.mp4/index.m3u8 HTTP/1.1", subrequest: "/kalapi_proxy/hls/p/102/sp/10200/serveFlavor/entryId/0_56yy00s5/v/2/ev/6/flavorId/0_wt1bf91a/name/a.mp4", upstream: "https://10.0.2.7:80/hls/p/102/sp/10200/serveFlavor/entryId/0_56yy00s5/v/2/ev/6/flavorId/0_wt1bf91a/name/a.mp4?pathOnly=1", host: "vod.xxxxx.com", referrer: "https://media.xxxx.com/"

2021/10/01 16:40:32 [error] 21648#21648: *36 open() "/etc/nginx/html/50x.html" failed (2: No such file or directory), client: 10.0.2.7, server: _, request: "GET /hls/p/102/sp/10200/serveFlavor/entryId/0_56yy00s5/v/2/ev/6/flavorId/0_wt1bf91a/name/a.mp4/index.m3u8 HTTP/1.1", host: "vod.xxxxx.com", referrer: "https://media.xxxxx.com/"
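One detail that can be read off the first nginx line: the upstream is `https://10.0.2.7:80`, i.e. nginx attempts a TLS handshake against port 80. `unknown protocol` is what OpenSSL commonly reports when the peer answers in plain HTTP. Whether that is the root cause here is an assumption on our side. The sketch below only flags that kind of scheme/port mismatch in a log line; the function name is hypothetical and the sample is a shortened copy of the line above.

```python
import re
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def upstream_mismatch(log_line):
    """Return (scheme, port) if the upstream URL pairs a scheme with the
    other scheme's default port, else None."""
    match = re.search(r'upstream: "(?P<url>[^",\s]+)', log_line)
    if not match:
        return None
    parts = urlsplit(match.group("url"))
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    for scheme, default in DEFAULT_PORTS.items():
        if scheme != parts.scheme and port == default:
            return parts.scheme, port
    return None


sample = (
    'while SSL handshaking to upstream, client: 10.0.2.7, server: _, '
    'upstream: "https://10.0.2.7:80/hls/p/102/sp/10200/serveFlavor/'
    'entryId/0_56yy00s5/v/2/ev/6/flavorId/0_wt1bf91a/name/a.mp4?pathOnly=1", '
    'host: "vod.xxxxx.com"'
)
print(upstream_mismatch(sample))  # ('https', 80)
```

If the mismatch is real, the place to look would be wherever nginx builds that upstream URL for the `/kalapi_proxy/` subrequest.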

So, when the play button is clicked in the player (which renders perfectly), the LB receives the request, since media and vod share the same entry point, and forwards it to the nginx vod, where it fails with an SSL issue.

We tried nginx with and without SSL configured, with SSL terminating in the LB. The same wildcard certificate is used. That works perfectly in the Apache front, but not so on the vod.

As general questions I would ask:

- Is there a place where I can read about how the VOD packager is meant to be set up, in terms of networking?

- CAN the packager be behind a load balancer? If so, what is the correct configuration? This is NOT explained in the instructions here: https://github.com/kaltura/platform-install-packages/blob/Propus-16.15.0/doc/rpm-cluster-deployment-instructions.md#nginx-vod-server

- Is the VOD being called over the Internet the correct behaviour? Why does putting it behind HAProxy not work?

Where is the documentation for all this?

Can someone give us a hand with some input or documentation?

Thanks!